
hadoop-17 Various Applications of Join

Reduce Join

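- Map side: tag every key/value pair with the table or file it came from, so that records from different sources can be told apart. Then emit the join field as the key, and the remaining fields plus the new tag as the value.

- Reduce side: by the time records reach the reducer, they are already grouped by the join field (the key). Within each group we only need to separate the records by source, using the tag added in the Map phase, and then merge them.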
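Stripped of the Hadoop plumbing, the tag, group, and merge flow above can be simulated in plain Java. This is a minimal in-memory sketch, not the MapReduce API: a `TreeMap` stands in for the shuffle's group-by-key, the names `ReduceJoinSketch`, `mapAndShuffle`, and `reduce` are hypothetical, and the sample rows are made up. The field layout (order: id, pid, amount; pd: pid, pname) follows the `TableMapper` code.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class ReduceJoinSketch {

    // Hypothetical stand-in for TableBean: source tag plus the fields we need
    record Tagged(String flag, String id, String pname, int amount) {}

    // "Map" + "shuffle": tag each record and group it under its pid (sorted, like the shuffle)
    static Map<String, List<Tagged>> mapAndShuffle(List<String> orderLines, List<String> pdLines) {
        Map<String, List<Tagged>> groups = new TreeMap<>();
        for (String line : orderLines) {            // order: id \t pid \t amount
            String[] f = line.split("\t");
            groups.computeIfAbsent(f[1], k -> new ArrayList<>())
                  .add(new Tagged("order", f[0], "", Integer.parseInt(f[2])));
        }
        for (String line : pdLines) {               // pd: pid \t pname
            String[] f = line.split("\t");
            groups.computeIfAbsent(f[0], k -> new ArrayList<>())
                  .add(new Tagged("pd", "", f[1], 0));
        }
        return groups;
    }

    // "Reduce": inside each key group, split records by tag, then merge them
    static List<String> reduce(Map<String, List<Tagged>> groups) {
        List<String> out = new ArrayList<>();
        for (List<Tagged> values : groups.values()) {
            List<Tagged> orders = new ArrayList<>();
            String pname = null;
            for (Tagged v : values) {
                if ("order".equals(v.flag())) {
                    orders.add(v);
                } else {
                    pname = v.pname();
                }
            }
            for (Tagged o : orders) {
                out.add(o.id() + "\t" + pname + "\t" + o.amount());
            }
        }
        return out;
    }

    public static void main(String[] args) {
        List<String> orders = List.of("1001\t01\t1", "1002\t02\t2", "1004\t01\t4");
        List<String> pds = List.of("01\tphone", "02\ttv");
        reduce(mapAndShuffle(orders, pds)).forEach(System.out::println);
    }
}
```

Both orders for pid `01` land in the same group as the single `01` product row, which is exactly why the reducer can fill in the product name without looking anywhere else.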

TableBean.java

```java
import org.apache.hadoop.io.Writable;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;

public class TableBean implements Writable {

    private String id;     // order id
    private String pid;    // product id
    private int amount;    // quantity ordered
    private String pname;  // product name
    private String flag;   // source table of this record: "order" or "pd"

    // No-arg constructor: Hadoop instantiates the bean via reflection when deserializing
    public TableBean() {
    }

    public String getId() {
        return id;
    }

    public void setId(String id) {
        this.id = id;
    }

    public String getPid() {
        return pid;
    }

    public void setPid(String pid) {
        this.pid = pid;
    }

    public int getAmount() {
        return amount;
    }

    public void setAmount(int amount) {
        this.amount = amount;
    }

    public String getPname() {
        return pname;
    }

    public void setPname(String pname) {
        this.pname = pname;
    }

    public String getFlag() {
        return flag;
    }

    public void setFlag(String flag) {
        this.flag = flag;
    }

    // Serialization
    @Override
    public void write(DataOutput out) throws IOException {
        out.writeUTF(id);
        out.writeUTF(pid);
        out.writeInt(amount);
        out.writeUTF(pname);
        out.writeUTF(flag);
    }

    // Deserialization: fields must be read in exactly the order they were written
    @Override
    public void readFields(DataInput in) throws IOException {
        this.id = in.readUTF();
        this.pid = in.readUTF();
        this.amount = in.readInt();
        this.pname = in.readUTF();
        this.flag = in.readUTF();
    }

    // Format of the joined output record: id \t pname \t amount
    @Override
    public String toString() {
        return id + "\t" + pname + "\t" + amount;
    }
}
```

TableMapper.java

```java
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;

import java.io.IOException;

public class TableMapper extends Mapper<LongWritable, Text, Text, TableBean> {

    private Text outK = new Text();
    private TableBean outV = new TableBean();
    private String fileName;

    @Override
    protected void setup(Context context) throws IOException, InterruptedException {
        // Called once per split: remember which file (order or pd) this split comes from
        FileSplit split = (FileSplit) context.getInputSplit();
        fileName = split.getPath().getName();
    }

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // Read one line and split it into fields
        String line = value.toString();
        String[] split = line.split("\t");

        // Branch on the source file
        if (fileName.contains("order")) {
            // order table: id \t pid \t amount -> key = pid, value tagged "order"
            outK.set(split[1]);
            outV.setId(split[0]);
            outV.setPid(split[1]);
            outV.setAmount(Integer.parseInt(split[2]));
            outV.setPname("");
            outV.setFlag("order");
        } else {
            // pd table: pid \t pname -> key = pid, value tagged "pd"
            outK.set(split[0]);
            outV.setPid(split[0]);
            outV.setPname(split[1]);
            outV.setId("");
            outV.setAmount(0);
            outV.setFlag("pd");
        }

        context.write(outK, outV);
    }
}
```

TableReducer.java

```java
import org.apache.commons.beanutils.BeanUtils;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;
import java.lang.reflect.InvocationTargetException;
import java.util.ArrayList;

public class TableReducer extends Reducer<Text, TableBean, TableBean, NullWritable> {

    @Override
    protected void reduce(Text key, Iterable<TableBean> values, Context context) throws IOException, InterruptedException {
        // All records with the same pid arrive in one call:
        // collect every order record, and keep the single pd record separately
        ArrayList<TableBean> orderBeans = new ArrayList<>();
        TableBean pdBean = new TableBean();

        for (TableBean value : values) {
            if ("order".equals(value.getFlag())) { // order table
                // Hadoop reuses one TableBean instance while iterating over values,
                // so adding value directly would store the same reference again and again,
                // and every element would end up holding the last record's data.
                // Copy each record into a fresh object instead.
                TableBean tmpTableBean = new TableBean();
                try {
                    // Copy the property values of value
                    BeanUtils.copyProperties(tmpTableBean, value);
                } catch (IllegalAccessException | InvocationTargetException e) {
                    e.printStackTrace();
                }
                orderBeans.add(tmpTableBean);
            } else { // pd (product) table
                try {
                    BeanUtils.copyProperties(pdBean, value);
                } catch (IllegalAccessException | InvocationTargetException e) {
                    e.printStackTrace();
                }
            }
        }

        // Fill in the product name for every order and write the joined record
        for (TableBean orderBean : orderBeans) {
            orderBean.setPname(pdBean.getPname());
            context.write(orderBean, NullWritable.get());
        }
    }
}
```

TableDriver.java

```java
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class TableDriver {

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

        // 1. Get the job
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        // 2. Set the jar
        job.setJarByClass(TableDriver.class);

        // 3. Set the mapper and reducer
        job.setMapperClass(TableMapper.class);
        job.setReducerClass(TableReducer.class);

        // 4. Set the mapper output key/value types
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(TableBean.class);

        // 5. Set the final output key/value types
        job.setOutputKeyClass(TableBean.class);
        job.setOutputValueClass(NullWritable.class);

        // 6. Set the input and output paths
        FileInputFormat.setInputPaths(job, new Path("E:\\hadoop\\tableinput"));
        FileOutputFormat.setOutputPath(job, new Path("E:\\hadoop\\tableoutput"));

        // 7. Submit the job
        boolean result = job.waitForCompletion(true);
        System.exit(result ? 0 : 1);
    }
}
```

- Drawback: in a Reduce Join, all of the joining happens in the Reduce phase. The reducers carry most of the processing load while the Map phase does very little, so resources are poorly utilized, and the Reduce phase is highly prone to data skew (a join key with many records sends all of them to a single reducer).

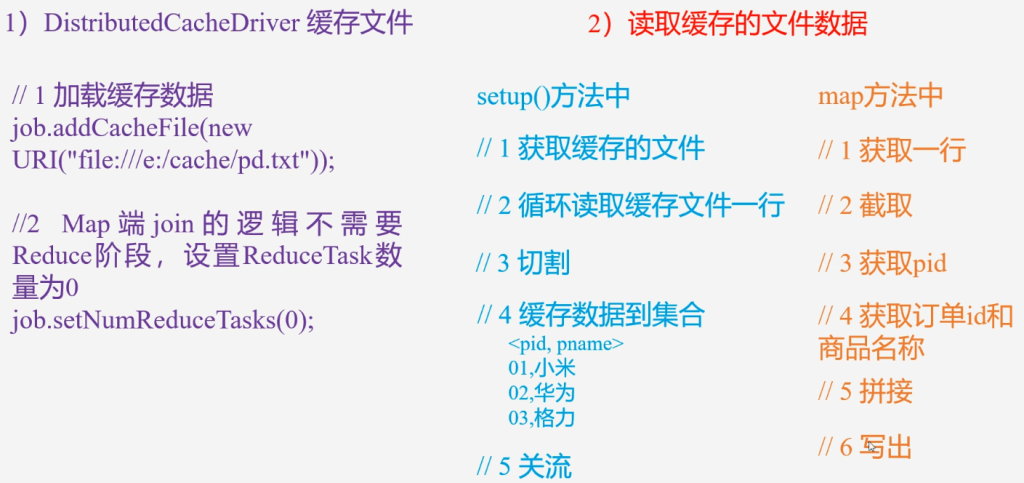
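To make the data-skew point concrete: every record that shares a join key is routed to the same reducer. The sketch below reuses the partition formula of Hadoop's default `HashPartitioner`, `(hashCode & Integer.MAX_VALUE) % numReduceTasks`, with `String.hashCode()` standing in for `Text.hashCode()`, so the partition numbers in a real job may differ. The class name `SkewSketch` and the per-key record counts are hypothetical.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.Map;

public class SkewSketch {

    // Same formula as Hadoop's default HashPartitioner; String.hashCode() replaces Text.hashCode()
    static int partitionFor(String key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    // How many records each reducer receives, given the number of records per join key
    static int[] reducerLoad(Map<String, Integer> recordsPerKey, int numReduceTasks) {
        int[] load = new int[numReduceTasks];
        for (Map.Entry<String, Integer> e : recordsPerKey.entrySet()) {
            load[partitionFor(e.getKey(), numReduceTasks)] += e.getValue();
        }
        return load;
    }

    public static void main(String[] args) {
        Map<String, Integer> perKey = new LinkedHashMap<>();
        perKey.put("01", 100_000); // hypothetical "hot" product
        perKey.put("02", 10);
        perKey.put("03", 10);
        // prints [10, 100010]: one reducer does almost all of the work
        System.out.println(Arrays.toString(reducerLoad(perKey, 2)));
    }
}
```

Adding more reduce tasks does not help here, because all 100,000 records of key `01` must still go to one reducer.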

Map Join

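Use case: joining one very small table with a large table. The small table is loaded into memory on the Map side, so the join is finished in the Map phase with no Reduce phase at all, which removes the Reduce-side load and the data skew described above.

Approach: use the DistributedCache.

- In the Mapper's setup() method, read the cached file into an in-memory collection.

- In the Driver class, register the file to be cached.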

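```java
// Cache a regular file to the nodes where the tasks run (local file system)
job.addCacheFile(new URI("file:///e:/cache/pd.txt"));

// When running on a cluster, use an HDFS path instead
job.addCacheFile(new URI("hdfs://hadoop102:8020/cache/pd.txt"));
```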
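The two steps above (load the small table in setup(), then probe it from map()) can be simulated without Hadoop. This is a minimal sketch, assuming the pd file is tab-separated pid/pname as in `MapJoinMapper`; `MapJoinSketch` is a hypothetical name, a `BufferedReader` over a string stands in for the cached file, and the rows are made up.

```java
import java.io.BufferedReader;
import java.io.StringReader;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

public class MapJoinSketch {

    // Small table held in memory: pid -> pname
    private final Map<String, String> pdMap = new HashMap<>();

    // Like setup(): load the whole small table once per task, skipping blank lines
    void setup(BufferedReader smallTable) {
        smallTable.lines()
                  .filter(line -> !line.isEmpty())
                  .map(line -> line.split("\t"))
                  .forEach(f -> pdMap.put(f[0], f[1]));
    }

    // Like map(): one call per line of the big table; a hash lookup replaces the shuffle
    String map(String orderLine) {
        String[] f = orderLine.split("\t");          // id \t pid \t amount
        return f[0] + "\t" + pdMap.get(f[1]) + "\t" + f[2];
    }

    public static void main(String[] args) {
        MapJoinSketch task = new MapJoinSketch();
        task.setup(new BufferedReader(new StringReader("01\tphone\n02\ttv\n")));
        for (String order : List.of("1001\t01\t1", "1002\t02\t2")) {
            System.out.println(task.map(order));
        }
    }
}
```

The trade-off is memory: every MapTask keeps a full copy of the small table, which is why this approach only suits a table small enough to fit comfortably in a task's heap.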

MapJoinMapper.java

```java
import org.apache.commons.lang3.StringUtils;
import org.apache.hadoop.fs.FSDataInputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IOUtils;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.URI;
import java.nio.charset.StandardCharsets;
import java.util.HashMap;

public class MapJoinMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

    // Small table held in memory: pid -> pname
    private HashMap<String, String> pdMap = new HashMap<>();
    private Text outK = new Text();

    @Override
    protected void setup(Context context) throws IOException, InterruptedException {
        // Get the cached file (pd.txt) and load its contents into pdMap
        URI[] cacheFiles = context.getCacheFiles();
        // Resolve the file system from the cache file's own URI (file:// locally, hdfs:// on a cluster)
        FileSystem fs = FileSystem.get(cacheFiles[0], context.getConfiguration());
        FSDataInputStream fis = fs.open(new Path(cacheFiles[0]));

        // Read the stream line by line
        BufferedReader reader = new BufferedReader(new InputStreamReader(fis, StandardCharsets.UTF_8));
        String line;
        while ((line = reader.readLine()) != null) {
            // Skip blank lines instead of stopping at the first one
            if (StringUtils.isEmpty(line)) {
                continue;
            }
            // pd.txt: pid \t pname
            String[] fields = line.split("\t");
            pdMap.put(fields[0], fields[1]);
        }

        // Close the stream
        IOUtils.closeStream(reader);
    }

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // Process one line of order.txt: id \t pid \t amount
        String line = value.toString();
        String[] split = line.split("\t");

        // Look up the product name by pid
        String pname = pdMap.get(split[1]);

        // Joined record: id \t pname \t amount
        outK.set(split[0] + "\t" + pname + "\t" + split[2]);
        context.write(outK, NullWritable.get());
    }
}
```

MapJoinDriver.java

```java
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;

public class MapJoinDriver {

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException, URISyntaxException {

        // 1. Get the job
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        // 2. Set the jar
        job.setJarByClass(MapJoinDriver.class);

        // 3. Set the mapper (no reducer: the join happens in the Map phase)
        job.setMapperClass(MapJoinMapper.class);

        // 4. Set the mapper output key/value types
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(NullWritable.class);

        // 5. Set the final output key/value types
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(NullWritable.class);

        // Disable the Reduce phase
        job.setNumReduceTasks(0);

        // Register the small table (pd.txt) as a cache file
        job.addCacheFile(new URI("file:///E:/hadoop/tableinput/pd.txt"));

        // 6. Set the input and output paths
        FileInputFormat.setInputPaths(job, new Path("E:\\hadoop\\tableinput2"));
        FileOutputFormat.setOutputPath(job, new Path("E:\\hadoop\\tableoutput2"));

        // 7. Submit the job
        boolean result = job.waitForCompletion(true);
        System.exit(result ? 0 : 1);
    }
}
```